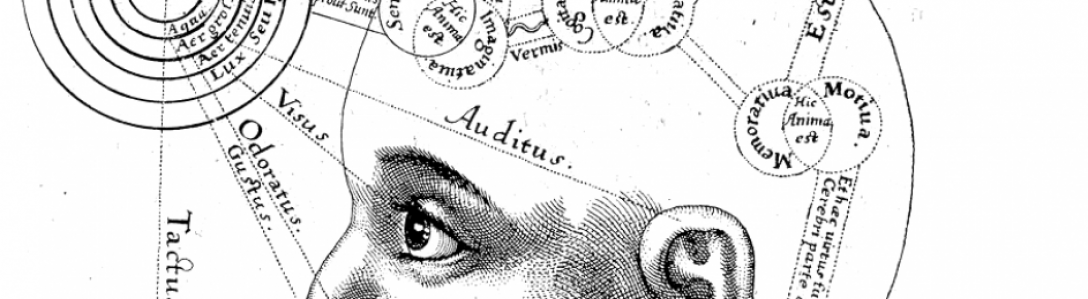

The computer metaphor, the idea that human behaviour, and more specifically the brain, can be usefully thought of as a computer, has hung around psychology’s neck for 50 years or more. A post at Aeon by Robert Epstein takes the metaphor to task, and I’d recommend it, though some are finding the metaphor harder to let go of.

Jeffrey Shalitt argues that the brain is a computer (for certain values of the word ‘computer’) slightly less carefully than one might want to when dealing with these slippery technological metaphors. Such analogies have come and gone (as the Aeon article notes) and one shouldn’t be too welded to them: they are there to guide our view of reality, they aren’t reality itself. If an analogy is not working well, or an alternative explanation fits just as well, we should abandon it. Epstein shows there are more ways to interpret behaviour than just as a computer program. And Shalitt’s post shows many of the dangers of the computer analogy.

Take, for example, Shalitt’s error when going over “perhaps the single stupidest passage in Epstein’s article” (the hubris is incredible). Epstein uses the well-worn example that baseball players can be very good at catching fly balls, and that rather than conceptualise the fielder’s strategy as “run my brain’s subconscious calculus programs to identify the intercept point of the ball”, we can rather more elegantly identify their strategy as “keep moving in a way that keeps the ball in a constant visual relationship with respect to home plate and the surrounding scenery”. Shalitt points out proudly that this way of moving can be described as an algorithm, and imagines this is the same thing as saying the fielder is using an algorithm to catch the ball. (Humorously, Shalitt goes on to say that catching a fly ball is in fact extremely complicated for an algorithm to do, which only weakens the claim that we are running a vast, vast bank of computations in order to do something as commonplace as catching a ball, a task that a child is able to accomplish.)
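To make the contrast between the two descriptions concrete, here is a toy sketch in Python. Everything in it is an assumption of mine rather than anything taken from Epstein or Shalitt: the function names, the one-dimensional field, the absence of air resistance, the 8 m/s running speed, and above all the reading of “constant visual relationship” as “the tangent of the ball’s elevation angle keeps rising at a steady rate”, which is only one simplified version of the strategy. The first function predicts the landing point from launch speed and angle. The second never predicts anything: at each moment the simulated fielder only checks whether the ball is climbing too fast or too slowly in view, and steps back or forward accordingly. And of course, being code, both are descriptions of a fielder’s movement, not claims about what goes on inside one.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (no air resistance assumed)


def landing_point_by_physics(speed, angle_deg):
    """The 'calculus' description: predict where the ball will land."""
    angle = math.radians(angle_deg)
    return speed ** 2 * math.sin(2 * angle) / G


def fielder_by_steady_view(speed, angle_deg, start_x, max_run=8.0, dt=0.01):
    """The perceptual description: keep the ball rising steadily in view.

    Returns (fielder position, ball position) at the moment the ball lands.
    """
    angle = math.radians(angle_deg)
    vx, vy = speed * math.cos(angle), speed * math.sin(angle)

    def ball(t):
        # The simulated world. The fielder never reads these numbers
        # directly, only the viewing angle that results from them.
        return vx * t, vy * t - 0.5 * G * t * t

    fielder_x = start_x
    step = max_run * dt  # distance covered in one tick of running

    # Stand still for one tick and note how fast the ball climbs in view.
    bx, by = ball(dt)
    rise_rate = (by / (fielder_x - bx)) / dt

    t = dt
    while True:
        bx, by = ball(t)
        if by <= 0:  # the ball has come down
            return fielder_x, bx
        gap = fielder_x - bx  # horizontal distance from ball to fielder
        # Ball overhead, or climbing in view faster than the steady rate:
        # the fielder is too close and backs up. Otherwise, run in.
        too_close = gap <= 0 or by / gap > rise_rate * t
        fielder_x += step if too_close else -step
        t += dt


if __name__ == "__main__":
    predicted = landing_point_by_physics(25.0, 50.0)
    fielder_x, ball_x = fielder_by_steady_view(25.0, 50.0, start_x=50.0)
    print(f"predicted landing point: {predicted:.1f} m")
    print(f"fielder ends up at:      {fielder_x:.1f} m (ball at {ball_x:.1f} m)")
```

In this sketch the second fielder ends up within arm’s reach of the ball without ever being told its speed, its launch angle, or the value of g. That we can write the rule down as a loop tells us that the movement is describable, which is a much weaker claim than saying the fielder is executing it.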

This substitution of explanation for description is taken even further when Shalitt claims that we are indeed born containing ‘information’, because DNA is information (in base 4)! The replication of DNA involves transcription, like writing data to a thumb drive, and you can even get viruses infecting the program and causing corruptions. The analogy of information across computer and biology fits hand-in-glove.

But of course, the comparison is built into the concepts and language of both because the process of DNA encoding and transcription was discovered at the same time that the electronics and computing industries were making great progress. Crick and Watson (in the research they stole from Rosalind Franklin) explicitly borrowed terms from these industries to describe the action of DNA (much as biologists in the 1930s used the metaphor of the Fordist factory to describe the process of protein synthesis: the cell had raw materials, blueprints, an assembly line, etc.). They abstracted beyond the fact that these long nucleic acid strands are actually under very different material forces than computer data, to see the similarities, and sharpen their analysis in particular ways. But then to take these concepts and apply them retroactively is like claiming that the shape of the glove explains why the hand has five fingers.

The lesson in this is to be careful with one’s concepts. The computer analogy, like all analogies, is an abstraction which allows us to take the physical world and recompose it in our thoughts: as William James (1911/1917) puts it (p72):

“The substitution of concepts and their connections… for the immediate perceptual world thus widens enormously our mental panorama. With concepts, we can go in quest of the absent, meet the remote, bend our experience.”

This state of remove from reality is also the great weakness of abstraction. Ideas can float freely without any need to correspond to the material world, and unless they are brought back down to reality once more, they can develop a life of their own. For instance: Darwin, inspired by the 19th century free market, looked at the natural world, and saw competitors fighting for advantage or dying in the struggle (that’s not the only way of conceiving of evolution, of course). The idea that the natural world was a capitalist marketplace echoed back and further justified the marketplace: the market explained biology, and then biology naturalised the market.

Today, the computer metaphor has colonised psychological theory to such an extent that some are simply unable to understand behavioural phenomena outside of that narrow framework and language. Computer storage is named ‘memory’ for its ability to store information for later retrieval, and then memory is redefined by this analogy as computer storage. Pointing out the problems to critics like Shalitt results in bafflement: what do you mean memory isn’t computer storage? It’s what memory means!

Unmooring an analogy from its basis in reality, forgetting that it is a guide to reality and not reality itself, is what James called ‘vicious intellectualism’, or the psychologist’s fallacy. Instead, James advised:

“Whenever we intellectualise a relatively pure experience, we ought to do so for the sake of redescending to the purer experience.”

That’s the 19th century way of telling people to stop being such nerds and engage with the world as they find it.
